Organized by the new CSG Data Engineering & AI

Register for the NHR4CES Community Workshop 2026

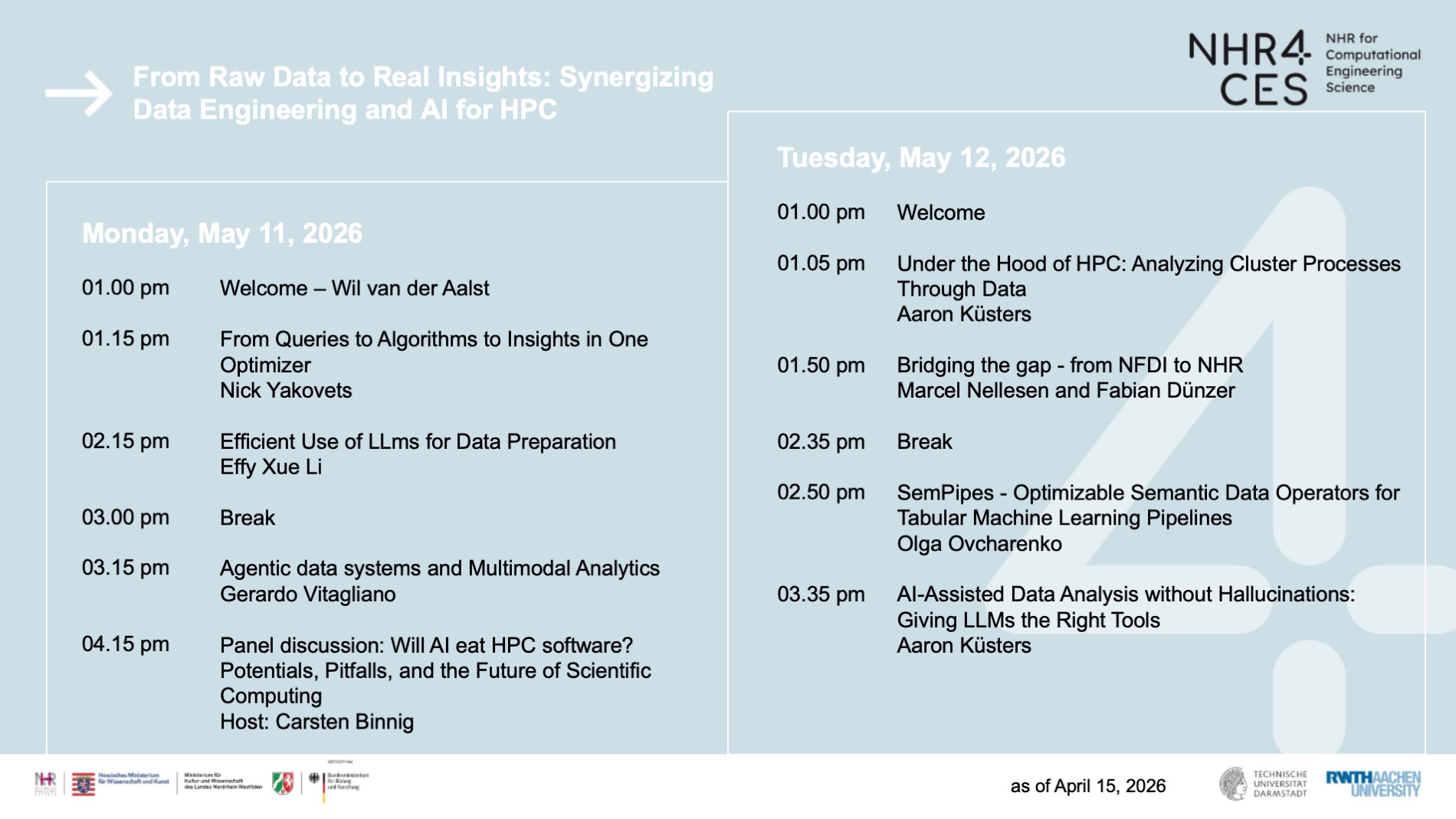

From Raw Data to Real Insights: Synergizing Data Engineering and AI for HPC

Date: May 11-12, 2026 - 01.00 pm - 05.00 pm

Online Workshop

Hosted by CSG Data Engineering & AI

With the continuous expansion of High-Performance Computing (HPC) capabilities, researchers are facing an unprecedented abundance of research data. To effectively harness this potential, isolated approaches are no longer sufficient; instead, a synergy of robust data management and advanced analytics is required. This workshop, organized by the CSG Data Engineering & AI, addresses the complete data lifecycle on HPC systems. We will explore how to bridge the gap between raw infrastructure and scientific

discovery by integrating Research Data Management (RDM), Automated Data Engineering, and Artificial Intelligence. The session will cover strategies ranging from workflows for ensuring data quality and storage, to applying Machine Learning and Process Mining for deeper analysis. By focusing on these intersecting disciplines, we aim to demonstrate how researchers can achieve scalable, reproducible results that adhere to FAIR principles, ultimately transforming raw data into real, actionable insights.

All about our speakers and talks!

Nick Yakovets

… is an Assistant Professor in the Department of Mathematics and Computer Science at Eindhoven University of Technology (TU/e). His research focuses on databases and data-intensive systems, with particular emphasis on foundations of databases, efficient data analytics, and engineering of high-performance data processing systems. Currently, he works on the design and implementation of core database technologies, management of massive graph data, and efficient processing of graph queries. He teaches courses in data modeling and databases, data management for data analytics, database technology, and data engineering.

Talk: From Queries to Algorithms to Insights in One Optimizer

Data system architectures are converging: storage in lakehouses, execution in embedded engines, querying in converged systems. Yet the optimizer still draws a hard line between queries it can plan and algorithms it cannot. Nowhere is this gap more visible than in graph analytics, where pipelines routinely chain queries with algorithms like PageRank and shortest paths across multiple engines, with serialization dominating data-access time. I argue that the optimizer is the next frontier in this convergence, and show how, through our work on AvantGraph at TU/e, cost-based optimization can be extended from pattern matching through recursive queries to graph algorithms. I introduce the concept of an optimizer-visible fragment, connecting this to the broader trend of architectural convergence and its implications for HPC and AI workloads.

Effy Xue Li

… is a Postdoctoral Researcher at the TRL Lab in the Database Architectures Group at CWI, the Netherlands. She completed her PhD at the University of Amsterdam, where her research focused on knowledge graph construction in complex domains and broader questions in data management, drawing on techniques from NLP, machine learning, and knowledge graphs. Previously, she was an AI Resident at Microsoft Research Cambridge and earned her MSc in AI from the University of Edinburgh.

Talk: Efficient Use of LLMs for Data Preparation

Data preparation accounts for 80% of a data scientist’s time and remains one of the least enjoyable yet essential tasks in the workflow. While LLMs offer new opportunities for automating structured data preparation, challenges persist in efficiency, scalability, and adaptability. In this talk, we explore the efficient use of LLMs for data preparation, including generating transformation code for data wrangling tasks and fine-tuning small LLMs for entity matching. We highlight how LLMs can be leveraged not just for reasoning but as scalable, cost-effective automation tools in data-cleaning pipelines. Finally, we discuss future research opportunities in the area, paving the way for more adaptable and interpretable AI-driven data science workflows.

Gerardo Vitagliano

… is a postdoctoral associate at the Data Systems Group of MIT CSAIL. He completed his doctoral studies at the Hasso Plattner Institute, with a thesis on structural representations of tabular data files. His main research goal is making data accessible to everyone: this includes an interests in multimodal data integration, optimizing the performance of LLM and agent-based systems, and assisting non-technical users in the design and deployment of complex data pipelines.

Talk: Agentic data systems and Multimodal Analytics

Over the past few years, retrieval-augmented generation (RAG) and LLM-based agents have rapidly evolved from simple document retrievers to complex architectures capable of orchestrating end-to-end data pipelines. This progress has opened the door to querying data in ways that blur the line between search, question answering, and database-like operations. In this talk, we cover some of the recent systems that incorporate optimization layers, dynamic retrieval, or reasoning strategies to query unstructured data. Yet, when moving from text-only corpora to multimodal datasets—where information may be stored as images, tables, or structured metadata—the challenges multiply: join-like reasoning across modalities, scaling over high-cardinality queries, and balancing precision with recall under context limits. To address these challenges, we present our vision for an analytical system over unstructured data. We highlight the open challenges for the design of such system and also in the often overlooked problem of benchmarking, presenting some of the most recent efforts on evaluating such systems on real-world pipelines data.

Carsten Binnig

… is a professor at TU Darmstadt and PI our CSG Data Engineering & AI. His main research focus is at the moment on the design of distributed and parallel data management systems for the next generation data center and enterprise cluster hardware. For example, high-speed RDMA-capable networks such as InfiniBand FDR/EDR used to be a very expensive technology that was only deployed in high-performance computing (HPC) clusters. However, InfiniBand has recently become cost-competitive with Ethernet and is becoming an interesting alternative for future data centers and enterprise clusters. Our initial results of building an InfiniBand-optimized system called I-Store show that this trend towards high-speed networks enables a new bread of distributed data management systems which lead to major performance gains compared to existing systems for analytical but also transactional workloads.

Panel discussion: Will AI eat HPC software? Potentials, Pitfalls, and the Future of Scientific Computing

As HPC systems continue to scale, so does the complexity of managing data, software, and workflows. At the same time, advances in AI, particularly in LLMs, are reshaping how software is written, optimized and maintained. This panel explores the question: Will AI fundamentally change, or even replace, how HPC software is developed and used?

Olga Ovcharenko

… is a PhD researcher at DEEM Lab (BIFOLD and TU Berlin), supervised by Sebastian Schelter. Her research is at the intersection of data management and machine learning. She focuses on leveraging large language models to improve machine learning pipeline development and enhance semantic query processing with multi-modal data. Olga earned a Master’s degree in Data Science from ETH Zürich, where she specialized in machine learning research and focused on multimodal single-cell data integration using self-supervised learning. She has also worked on system internals, for instance, Apache SystemDS. Olga is a member of the Apache SystemDS Project Management Committee and has published her research at ICML, IEEE VIS, CIKM, and SIGMOD.

Talk: SemPipes - Optimizable Semantic Data Operators for Tabular Machine Learning Pipelines

Machine learning on tabular data relies on complex data preparation pipelines that are costly to design and optimize. We introduce SemPipes, a declarative programming model that integrates LLM-powered semantic data operators into tabular ML pipelines. Semantic operators express data transformations in natural language, while execution is handled by a runtime system that synthesizes task-specific implementations based on data characteristics and pipeline context. Using LLM-based code synthesis guided by evolutionary search, SemPipes enables automatic optimization of data operations. Experiments across diverse tabular ML tasks show that SemPipes improves end-to-end predictive performance for both expert-designed and agent-generated pipelines, while reducing pipeline complexity.

Aaron Küsters

… holds a bachelor’s and masters’s degree in computer science from the RWTh Aachen University. Since 2024 he is pursuing an PhD in the PADS (Process and Data Science) research group of the RWTH Aachen University. In December 2024, he joined NHR4CES as part of the CSG Data Science and Machine Learning group.

Talk: Under the Hood of HPC: Analyzing Cluster Processes Through Data

HPC clusters power much of modern computational science, but what actually happens inside and how users interact with them at scale often stays hidden. In this talk, I'll show how data from schedulers like SLURM can be turned into structured event logs that make these inner workings visible. We'll look at how to extract such data in practice, why a single perspective (just jobs, or just nodes) rarely tells the full story, and how object-centric process mining helps tie the different pieces together. Along the way, we look at findings observed in real-life cluster data: From everyday job patterns to what the data looked like during an unplanned shutdown. Finally, we turn to some thoughts on where all of this could go, for operators making sense of their clusters, and for users getting more out of them.

Talk: AI-Assisted Data Analysis without Hallucinations: Giving LLMs the Right Tools

Large Language Models (LLMs) like ChatGPT can suggest analysis strategies and write fluent explanations. But when asked to compute a p-value or count events in a dataset, they confidently make up numbers. This makes naive "just ask the AI" approaches dangerous for any analysis that requires correctness. In this talk, I present an alternative: Strict tool chaining. Instead of generating code or computing results itself, the AI interacts with existing tools, just like we would. It decides what to compute, then delegates to verified tools (statistics or data science libraries, a process mining engine, visualization backends) that do the actual work. In a live demo, I show how a prototype AI copilot with pre-built, trusted tool calls produces correct, verifiable results where an unconstrained LLM would hallucinate. The talk closes with a discussion of when this works, where it breaks, and how the pattern generalizes beyond process mining.